Pactto / Web App

Collaboration that doesn't end when the call does.

Pactto is a canvas-based video production workspace and conferencing tool. I helped design a suite of commenting tools to bridge the gap between live meetings and async work.

Role: Product Design Intern

Timeline: 4 Months

Team: 5 Designers

Too Long; Didn't Read

Pactto is a canvas-based platform designed to centralize the video production lifecycle. My role was to eliminate the context gap between live and async work by introducing a system that anchors feedback directly to the asset. This replaced scattered Slack messages with precise, timeline-based comments and tasks that persist long after the meeting ends.

Success Metrics

~65% Less Tool Switching

~40% Faster Reviews

3 Methods of Commenting

context

Too Many Tools

The video editing workflow is fragmented. Teams are forced to juggle separate tools for feedback, tasks, and communication, losing critical context with every switch.

Enter Pactto: One Room, Full Story

Pactto unifies live conferencing and the entire video editing workflow into a single, canvas-based "Room." It replaces scattered tools with one intelligent space where feedback, decisions, and production happen together.

research

Validating the Friction

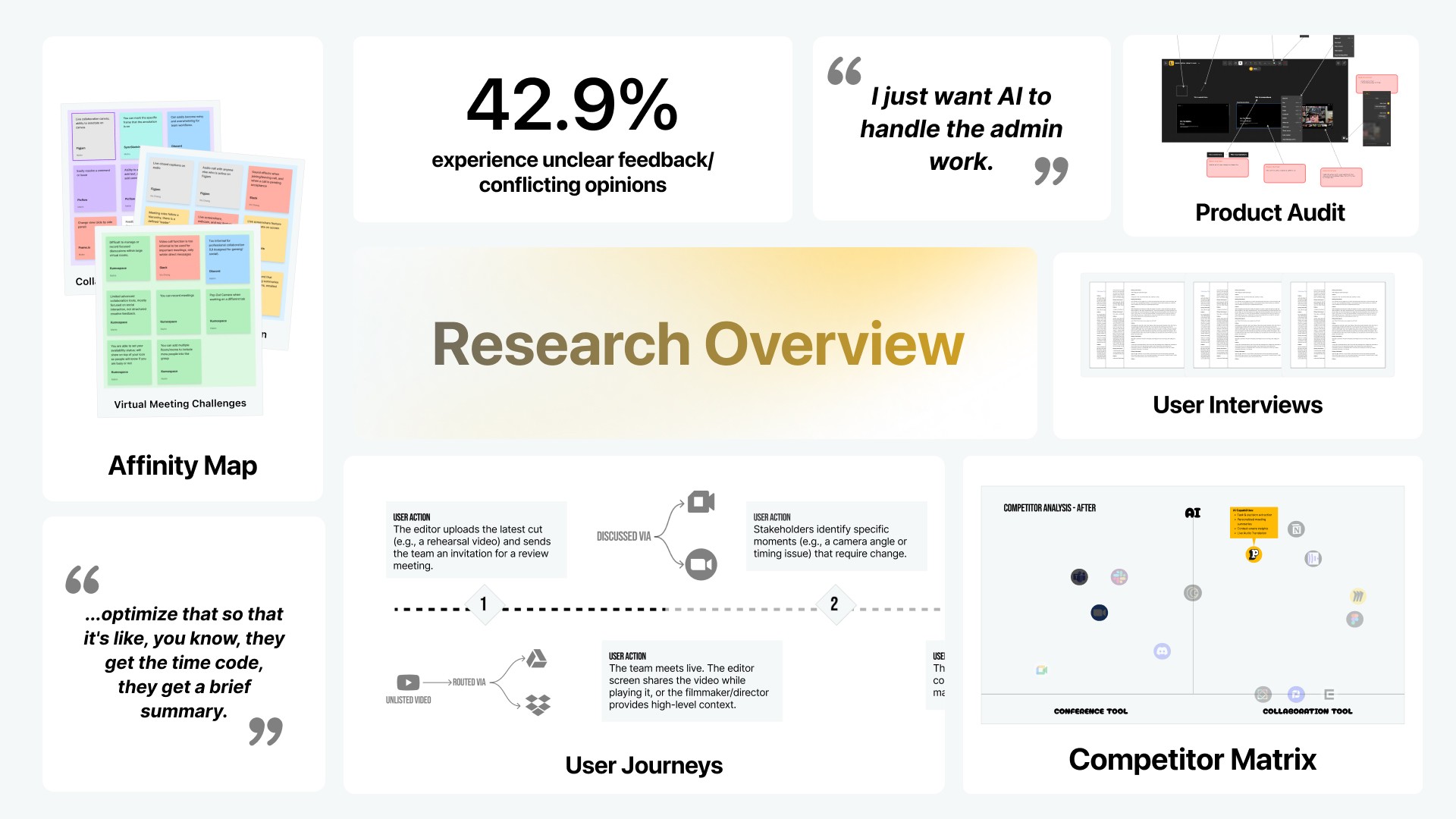

We synthesized our research into an affinity map. While we uncovered several friction points, we prioritized one key insight to tackle first.

Deep Dive: Is Pactto a conferencing or collaboration tool?

The Problem We Focused On

Within the affinity map, one key insight stood out as the root cause of the fragmentation.

Key Insight: The "Async Gap"

With 42.9% of users blocked by unclear feedback, we identified a critical flaw: Pactto was purely synchronous. A stakeholder who joined late or missed a meeting lost the context and had to chase colleagues over email or Slack just to catch up. We needed a Contextual Library to ensure that feedback persists on the asset, not just in the moment.

User Insights: Beyond the Async Gap

How Might We

preserve feedback context in async video reviews so decisions stay clear, traceable, and tied to the work?

This question guided the design of our core feature: a unified intelligent canvas where feedback organizes itself.

design process

We took an Iterative Approach

We followed a classic iterative design approach, testing and updating our prototypes on a rigorous weekly cycle to ensure constant validation.

Accelerating Prototyping with AI

Our stakeholder cycle required us to present functional features every week. Static mocks couldn't capture the complexity of video scrubbing or interaction timing, so we inverted the traditional workflow.

Instead of perfecting pixels first, we used Google AI Studio to "vibe code" functional prototypes.

1

The Strategy

We built rough, interactive web prototypes in code to validate the feel of the interactions (like drag-to-comment) during weekly reviews.

2

The Benefit

This allowed us to iterate on complex mechanics in real time. Once an interaction was validated in code, we moved to Figma to codify the visual specs for the final Design System.

3

The Handoff

Engineers received a dual handoff: the Code Prototype for exact behavior and the Figma Design System for exact visuals.

solution

Designing for Expressive Feedback

Defining the MVP Together

To filter our ideas into a shipping product, we conducted group reviews in Google Sheets. We weighed Priority against Effort (Design & Eng) to decide exactly which features were critical for the Open Beta and which could wait.

Prioritizing Async Collaboration

Research confirmed the "Async Gap" was a critical failure point. With the live tools already stable, we prioritized the Commenting Suite as our absolute P0, building a persistent feedback layer to finally sit alongside the real-time workflow.

Rejected Idea: The Collapsible Sidebar Proposal

Foundations: Contextual Commenting

We designed three distinct commenting primitives to handle every type of creative feedback:

Pin Comments (Spatial)

Allows users to anchor feedback to a precise coordinate on the video frame. By clicking exactly where the issue is, reviewers eliminate ambiguity for specific visual edits, ensuring the editor knows exactly which pixel needs attention.

Drag Comments (Area)

Enables users to highlight specific regions or group elements within the frame. By drawing a bounding box around the focus area, reviewers can clearly define the scope of visual changes without needing to describe the location verbally.

Timeline Comments (Temporal)

Permits feedback to span a specific duration rather than a single static frame. This allows reviewers to critique temporal elements like pacing, audio syncing, or action sequences by marking a start and end point directly on the scrubber.
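As an illustration only, the three primitives could be modeled as a single tagged union so that one comment entry point can produce any of them. This is a minimal sketch, not Pactto's actual data model: every type and function name here is hypothetical, and it assumes frame coordinates are normalized to the 0..1 range and that pin and area comments also carry the timestamp of the frame they were placed on.

```typescript
// Hypothetical model of the three comment anchors. None of these names
// come from Pactto's codebase.
type PinAnchor = { kind: "pin"; timeSec: number; x: number; y: number };
type AreaAnchor = {
  kind: "area";
  timeSec: number;
  x: number;
  y: number;
  width: number;
  height: number;
};
type RangeAnchor = { kind: "range"; startSec: number; endSec: number };
type Anchor = PinAnchor | AreaAnchor | RangeAnchor;

interface ReviewComment {
  id: string;
  author: string;
  body: string;
  anchor: Anchor;
}

// Human-readable summary of where a comment is attached.
// x, y, width, height are assumed to be fractions of the frame (0..1).
function describeAnchor(a: Anchor): string {
  switch (a.kind) {
    case "pin":
      return `point (${a.x}, ${a.y}) at ${a.timeSec}s`;
    case "area":
      return `region ${a.width}x${a.height} from (${a.x}, ${a.y}) at ${a.timeSec}s`;
    case "range":
      return `${a.startSec}s to ${a.endSec}s`;
  }
}

// True when the comment should be shown for playhead time t.
// The half-second tolerance for pin and area comments is an assumption.
function isActiveAt(a: Anchor, t: number, toleranceSec = 0.5): boolean {
  if (a.kind === "range") {
    return t >= a.startSec && t <= a.endSec;
  }
  return Math.abs(t - a.timeSec) <= toleranceSec;
}
```

Keeping all three shapes under one `Anchor` type is one way to let a single interaction point decide which kind of comment to create based on the gesture (click, drag on the frame, or drag on the scrubber).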

Design Constraint: Unified Interaction

Give users the ability to comment in different ways for different needs using distinct tools, but access them through a Single Interaction Point to minimize cognitive load.

Design Decision: The "Comment vs. Task" Friction

The Crossroads (Simplicity vs. Accountability): We faced a strategic choice between following the industry standard of generic comments (like Figma) and building for strict accountability. Our research showed that for video editors, generic simplicity created paralysis, leaving them unable to distinguish between a casual "maybe" and a critical "must-do" within a flood of feedback.

Unified Activity Panel

We bridged the gap by placing a lightweight "Task" toggle directly inside the comment box. This allows "Chat" and "Work" to coexist in one stream, giving editors the freedom to discuss ideas loosely or filter the feed to reveal a prioritized checklist of mandatory action items.
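To make the single-stream idea concrete, here is a minimal sketch of how one feed could serve both views. The names (`FeedItem`, `filterFeed`) are hypothetical, and ordering open tasks ahead of completed ones is an assumption about what "prioritized" could mean, not a description of the shipped behavior.

```typescript
// Hypothetical feed item: a comment with an optional "Task" flag.
interface FeedItem {
  id: string;
  body: string;
  isTask: boolean; // set by the "Task" toggle in the comment box
  done: boolean;   // only meaningful when isTask is true
}

type FeedFilter = "all" | "tasks";

// "all" returns the full conversation in its original order.
// "tasks" returns only task items, open ones first (assumed ordering),
// keeping the original order within each group.
function filterFeed(items: FeedItem[], filter: FeedFilter): FeedItem[] {
  if (filter === "all") {
    return items;
  }
  const tasks = items.filter((item) => item.isTask);
  const open = tasks.filter((item) => !item.done);
  const completed = tasks.filter((item) => item.done);
  return [...open, ...completed];
}

const feed: FeedItem[] = [
  { id: "c1", body: "Maybe try a warmer grade?", isTask: false, done: false },
  { id: "c2", body: "Fix the logo at 0:12", isTask: true, done: true },
  { id: "c3", body: "Trim the intro by two seconds", isTask: true, done: false },
];

const checklist = filterFeed(feed, "tasks").map((item) => item.id);
```

Because the task flag lives on the comment itself, chat and work stay in the same stream and the checklist is just a filtered view of it.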

Design Decision Trade-Off

By adding the toggle, we introduced a micro-moment of friction: the user now has to make a choice ("Is this a task?"). However, we accepted this slight increase in cognitive load at input to drastically reduce the cognitive load at review. We sacrificed a minimal UI to gain absolute accountability.

Pactto Intelligence: Smart Voice

While we developed a suite of AI tools, I want to highlight Smart Voice, the feature that solves the conflict between speed and documentation.

Smart Voice

Automatically transcribes spoken feedback and anchors it to the video timeline. By capturing the nuance of voice notes, reviewers can provide detailed context without typing, while editors receive precise, actionable text synced to the exact moment.
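The anchoring step can be sketched as follows. This is an illustration under stated assumptions, not the production logic: it assumes a transcription service has already returned text segments with offsets from the start of the recording, that the video keeps playing at normal speed while the reviewer speaks, and that all names (`TranscriptSegment`, `anchorTranscript`) are hypothetical.

```typescript
// Hypothetical output of a speech-to-text step: text plus its offset
// (in seconds) from the moment the reviewer started recording.
interface TranscriptSegment {
  text: string;
  offsetSec: number;
}

// A transcribed note pinned to a position on the video timeline.
interface TimelineNote {
  text: string;
  videoTimeSec: number;
}

// Maps each segment to a video timestamp by adding its offset to the
// playhead position at recording start, clamped to the video's length.
// Assumes 1x playback during recording.
function anchorTranscript(
  segments: TranscriptSegment[],
  playheadAtStartSec: number,
  videoDurationSec: number
): TimelineNote[] {
  return segments.map((segment) => ({
    text: segment.text.trim(),
    videoTimeSec: Math.min(
      playheadAtStartSec + segment.offsetSec,
      videoDurationSec
    ),
  }));
}

const notes = anchorTranscript(
  [
    { text: " Cut here ", offsetSec: 0 },
    { text: "Music is too loud", offsetSec: 4.5 },
  ],
  30, // playhead position when recording began
  33  // total video length
);
```

If the reviewer pauses the video before speaking, the same function still works with every segment collapsing onto the paused frame when offsets are passed as 0.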

Design Constraint: Integrated AI

When designing AI, we required Baked-In Intelligence to avoid disconnected "AI Islands." Smart tools needed to live inside the flow so assistance appears exactly where the user is working, not in a separate tab.

developer handoff

The Handoff: From Prototype to Production

The System: We expanded Pactto’s existing UI into a modular Design System (Typography, Color, Components) to ensure consistency.

The Logic: We mapped every user flow to its corresponding "Vibe Coded" prototype, giving engineers a reference for exact animation behaviors.

The Priority: Crucially, we tagged every feature with MVP Priority Levels, clearly distinguishing "Open Beta Must-Haves" from "Fast-Follows" to prevent scope creep.

takeaways

The Outcome

We successfully transformed a fragmented "Frankenstein" workflow into a unified, intelligent production environment. By delivering a production-ready Design System and prioritized MVP, we positioned Pactto to launch an Open Beta that reduces feedback loops from days to minutes.

~65% Less Tool Switching

~40% Faster Reviews

3 Methods of Commenting

Key Takeaways

1

What didn’t work?

Vibe Coding for Velocity

We allowed a P1 feature (AI meeting summaries) to block the release of our core Commenting System, costing us a two-week delay. Since the AI feature was ultimately cut from the Open Beta anyway, we learned that prioritizing enhancements over the core feedback loop only hinders essential validation.

2

What would I do differently?

AI Features Should Be Baked in

I would treat the core utility (Commenting) and the enhancements (AI Summaries) as separate releases. By shipping the essential async tools first, we could have validated the primary workflow immediately, rather than holding it hostage for a secondary feature.
